Before a study utilizing behavioral observations is conducted, several design-related considerations that affect how IRR will be assessed must be decided a priori. These design issues are introduced here, and their impact on computation and interpretation is discussed more thoroughly in the computation sections below.

First, it must be decided whether a coding study is designed such that all subjects in a study are rated by multiple coders, or whether a subset of subjects is rated by multiple coders with the remainder coded by single coders. The contrast between these two options is depicted in the left and right columns of Table 1. In general, rating all subjects is acceptable at the theoretical level for most study designs. However, in studies where providing ratings is costly and/or time-intensive, selecting a subset of subjects for IRR analysis may be more practical because it requires fewer overall ratings, and the IRR for the subset of subjects may be used to generalize to the full sample.

An instrument's IRR should not be confused with its validity: for example, an instrument may have good IRR but poor validity if coders’ scores are highly similar and have a large shared variance but the instrument does not properly represent the construct it is intended to measure.
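The subset design described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `assign_coding_design`, the 25% double-coding fraction, the coder labels, and the choice to have every coder rate each subject in the reliability subset are all hypothetical and do not come from the paper.

```python
import random


def assign_coding_design(subject_ids, coders, double_coded_fraction=0.25, seed=0):
    """Randomly pick a reliability subset and assign coders to every subject.

    Subjects in the reliability subset are rated by all coders; the remaining
    subjects are rated by a single, randomly chosen coder. The fraction and
    the seed are illustrative defaults, not recommendations.
    """
    rng = random.Random(seed)
    ids = list(subject_ids)
    n_double = max(1, round(len(ids) * double_coded_fraction))
    reliability_subset = set(rng.sample(ids, n_double))

    assignments = {}
    for sid in ids:
        if sid in reliability_subset:
            assignments[sid] = list(coders)          # multiply coded
        else:
            assignments[sid] = [rng.choice(coders)]  # singly coded
    return assignments, reliability_subset


# Hypothetical study: 40 subjects, three coders.
assignments, subset = assign_coding_design(range(1, 41), ["A", "B", "C"])
n_ratings = sum(len(c) for c in assignments.values())
print(f"{len(subset)} subjects double-coded, {n_ratings} ratings in total")
```

Selecting the subset at random, rather than by convenience, is what makes it plausible to generalize the subset's IRR to the full sample.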

Measurement error (E) prevents one from being able to observe a subject’s true score directly, and may be introduced by several factors. For example, measurement error may be introduced by imprecision, inaccuracy, or poor scaling of the items within an instrument (i.e., issues of internal consistency); instability of the measuring instrument in measuring the same subject over time (i.e., issues of test-retest reliability); and instability of the measuring instrument when measurements are made between coders (i.e., issues of IRR). Each of these issues may adversely affect reliability, and the last of these issues is the focus of the current paper.

IRR analysis aims to determine how much of the variance in the observed scores is due to variance in the true scores after the variance due to measurement error between coders has been removed (Novick, 1966), such that

Reliability = Var(T) / Var(X) = Var(T) / (Var(T) + Var(E))   (3)

For example, an IRR estimate of 0.80 would indicate that 80% of the observed variance is due to true score variance or similarity in ratings between coders, and 20% is due to error variance or differences in ratings between coders.

Because true scores (T) and measurement errors (E) cannot be directly accessed, the IRR of an instrument cannot be directly computed. Instead, true scores can be estimated by quantifying the covariance among sets of observed scores (X) provided by different coders for the same set of subjects, where it is assumed that the shared variance between ratings approximates the value of Var(T) and the unshared variance between ratings approximates Var(E), which allows reliability to be estimated in accordance with equation 3. IRR analysis is distinct from validity analysis, which assesses how closely an instrument measures an actual construct rather than how well coders provide similar ratings. Instruments may have varying levels of validity regardless of the IRR of the instrument.
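The logic of estimating reliability from shared variance can be illustrated with a small simulation. The sketch below is not one of the IRR statistics covered in this tutorial. It assumes normally distributed true scores and errors, two coders with equal error variance, and a simple covariance-based estimator, and the function name `simulate_reliability` and all parameter values are hypothetical. With a true-score variance of 4 and an error variance of 1, the theoretical reliability is 4 / (4 + 1) = 0.80.

```python
import random
import statistics


def simulate_reliability(n_subjects=5000, sd_true=2.0, sd_error=1.0, seed=0):
    """Compare the 'oracle' ratio Var(T)/Var(X) with a covariance-based estimate.

    The oracle value uses the true scores, which are never observable in
    practice. The estimate uses only the two coders' observed ratings.
    """
    rng = random.Random(seed)
    true_scores = [rng.gauss(0.0, sd_true) for _ in range(n_subjects)]

    # Each coder's observed score is the true score plus independent error.
    coder_a = [t + rng.gauss(0.0, sd_error) for t in true_scores]
    coder_b = [t + rng.gauss(0.0, sd_error) for t in true_scores]

    var_a = statistics.pvariance(coder_a)
    var_b = statistics.pvariance(coder_b)

    # Oracle: variance of true scores over variance of one coder's ratings.
    oracle = statistics.pvariance(true_scores) / var_a

    # Shared variance between coders (their covariance) stands in for Var(T);
    # the average observed variance stands in for Var(X).
    mean_a = statistics.fmean(coder_a)
    mean_b = statistics.fmean(coder_b)
    cov_ab = sum((a - mean_a) * (b - mean_b)
                 for a, b in zip(coder_a, coder_b)) / n_subjects
    estimate = cov_ab / ((var_a + var_b) / 2)
    return oracle, estimate


oracle, estimate = simulate_reliability()
print(f"oracle Var(T)/Var(X): {oracle:.3f}")
print(f"covariance-based estimate: {estimate:.3f}")
```

Under these assumptions both values should land near 0.80, which shows how the covariance between coders can substitute for the unobservable Var(T).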

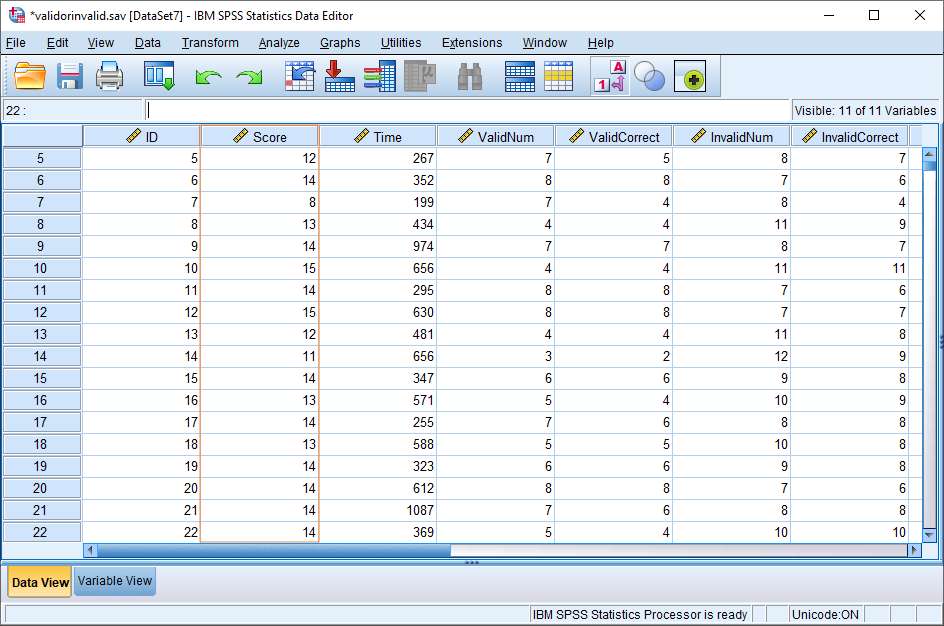

The variance of the observed scores, Var(X), is equal to the variance of the true scores, Var(T), plus the variance of the measurement error, Var(E), provided that the true scores and errors are uncorrelated.
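The role of the uncorrelatedness assumption can be made explicit with a short derivation. It assumes the additive model of classical test theory, in which each observed score is the sum of a true score and an error, X = T + E. That model is not written out in the surrounding text and is stated here as an assumption.

```latex
\begin{align*}
\operatorname{Var}(X) &= \operatorname{Var}(T + E) \\
                      &= \operatorname{Var}(T) + \operatorname{Var}(E) + 2\operatorname{Cov}(T, E) \\
                      &= \operatorname{Var}(T) + \operatorname{Var}(E)
                         \quad \text{when } \operatorname{Cov}(T, E) = 0 .
\end{align*}
```

If true scores and errors were correlated, the covariance term would not vanish and the observed variance could no longer be split cleanly into true-score and error components.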

The assessment of inter-rater reliability (IRR, also called inter-rater agreement) is often necessary for research designs where data are collected through ratings provided by trained or untrained coders. However, many studies use incorrect statistical analyses to compute IRR, misinterpret the results from IRR analyses, or fail to consider the implications that IRR estimates have for statistical power in subsequent analyses.

This paper will provide an overview of methodological issues related to the assessment of IRR, including aspects of study design, selection and computation of appropriate IRR statistics, and interpreting and reporting results. Computational examples include SPSS and R syntax for computing Cohen’s kappa for nominal variables and intra-class correlations (ICCs) for ordinal, interval, and ratio variables. Although it is beyond the scope of the current paper to provide a comprehensive review of the many IRR statistics that are available, references will be provided to other IRR statistics suitable for designs not covered in this tutorial.